Is AI-Generated Code Safe? The Critical Role of Human Oversight

By Ahmed Elsayed on January 27, 2026

With the rise of tools like ChatGPT and GitHub Copilot, it is now possible to generate an entire app in a few clicks. But a startup founder still has to ask one critical question: "Is this code safe for my customers' data?"

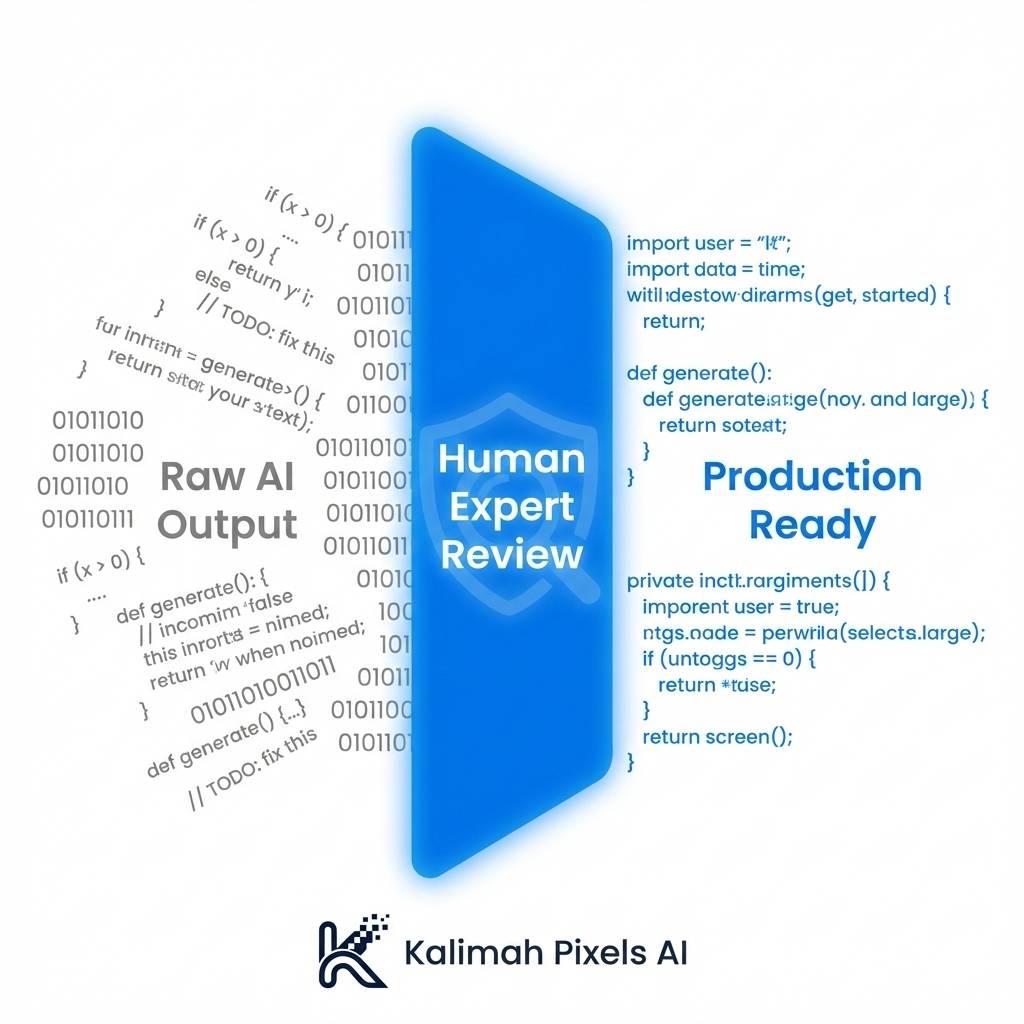

The short answer: Not always. AI models are trained on billions of lines of code from the internet, including outdated and insecure patterns. Without an expert reviewing the output, you can end up shipping catastrophic security vulnerabilities.

Why We Don't Rely on AI 100%

At Kalimah Pixels AI, we treat AI as a "Super Assistant," not the "Manager."

1. Code Hallucinations

Sometimes AI invents software libraries that don't exist or uses deprecated functions. Only an expert developer can spot these errors before they cause your app to crash in production.
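
To make the hallucinated-library problem concrete, here is a minimal Python sketch of one automated check a reviewer might run before reading the code by hand. The function name `find_unresolvable_imports` and the package name in the sample are hypothetical, and the check only reflects what is installed in the environment where it runs. It does not detect deprecated functions or tell you whether a package that does exist is trustworthy.

```python
import ast
import importlib.util


def _is_installed(name: str) -> bool:
    """Return True if a top-level module can be located without importing it."""
    try:
        return importlib.util.find_spec(name) is not None
    except ValueError:
        # Raised when the module is already loaded but has no spec; it exists.
        return True


def find_unresolvable_imports(source: str) -> list[str]:
    """List top-level modules imported by `source` that cannot be found locally."""
    tree = ast.parse(source)
    top_level = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                top_level.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (level > 0) point at the local package; skip them.
            if node.level == 0 and node.module:
                top_level.add(node.module.split(".")[0])
    return sorted(name for name in top_level if not _is_installed(name))


if __name__ == "__main__":
    # Hypothetical AI-generated snippet: one real module, one invented package.
    generated = "import json\nimport fast_json_magic_parser_9000\n"
    print(find_unresolvable_imports(generated))
```

A check like this narrows down where to look, but a flagged name still needs a human decision: it may be a typo, a missing dependency, or a library that never existed.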

2. Business Logic Nuances

AI knows how to write a "Pay Button," but it doesn't understand your specific financial compliance rules or complex user permission structures. This requires deep human understanding.
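
As an illustration of the kind of rule a generic code generator cannot guess, the sketch below encodes a made-up refund policy in Python. The roles, the limits, and the names `REFUND_LIMITS`, `User`, and `can_refund` are all assumptions invented for this example. Real rules come from your own compliance and product requirements.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical policy: the maximum refund each role may approve on its own.
REFUND_LIMITS = {
    "support": Decimal("50"),
    "manager": Decimal("1000"),
}


@dataclass(frozen=True)
class User:
    name: str
    role: str


def can_refund(user: User, amount: Decimal) -> bool:
    """Allow a refund only for known roles, positive amounts, and within the limit."""
    limit = REFUND_LIMITS.get(user.role)
    # Unknown roles are denied by default instead of falling through to "allowed".
    if limit is None:
        return False
    return Decimal("0") < amount <= limit


if __name__ == "__main__":
    agent = User(name="Sam", role="support")
    print(can_refund(agent, Decimal("25")))
    print(can_refund(agent, Decimal("75")))
```

The button that triggers the refund is the easy part. The deny-by-default branch and the per-role limits are decisions that someone who knows the business has to make.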

3. Security & Privacy

AI might write code that functions, but it can omit encryption for sensitive data or leave security holes open, such as unvalidated input or missing access checks. Our security team reviews every line to ensure compliance with global safety standards.
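
One concrete instance of the data-protection point above is password storage. Generated code sometimes saves passwords as plain text. A reviewer would typically expect a salted, slow hash instead. The Python sketch below uses only the standard library. The function names and the `ITERATIONS` value are assumptions for illustration, not a statement of our production setup, and many teams would choose a dedicated password-hashing library instead.

```python
import hashlib
import hmac
import secrets

# Assumed work factor; tune it for your hardware and security policy.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) for storage; the plain password is never stored."""
    salt = secrets.token_bytes(16)  # fresh random salt for every password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, expected)


if __name__ == "__main__":
    salt, digest = hash_password("correct horse battery staple")
    print(verify_password("correct horse battery staple", salt, digest))
    print(verify_password("wrong guess", salt, digest))
```

Note that hashing is one-way and suits passwords. Data that must be read back later, such as addresses or payment details, needs encryption with properly managed keys, which is a separate review item.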

Our Winning Formula

We use AI to accelerate the repetitive tasks, such as building UIs and basic data binding, which saves 60% of the time. Our experts then spend the remaining 40% on performance optimization, security patching, and quality assurance.

The Bottom Line: Speed is great, but safety is non-negotiable. With us, you get the velocity of a bot and the wisdom of a human.